Analysis

AI and Accountancy: Evolution or Elimination? Here’s What the Data Tells Us

Will AI replace accountants? Explore what 2026 data on AI in accounting reveals about job growth, productivity gains, skill shifts, and the future of the profession globally.

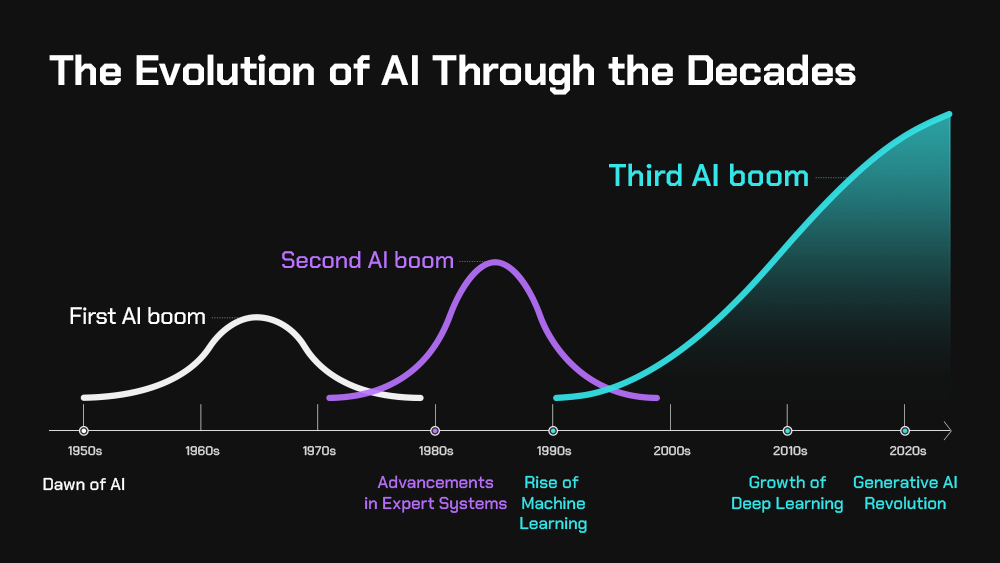

Whenever a new wave of technology emerges, the same question follows: Will this replace jobs? With artificial intelligence (AI), that question feels more urgent. AI can scan thousands of transactions in seconds. It can detect patterns humans might miss. Understandably, people are asking whether accountants, especially junior ones, will become obsolete. Seen through the lens of the Institute of Singapore Chartered Accountants (ISCA), that is not where the profession is heading.

But ISCA is not alone in that assessment. A growing body of research — from MIT, Stanford, and the world’s largest professional services firms — suggests that AI in accounting is not a termination notice. It is, in many respects, an upgrade. The more important question isn’t whether AI will eliminate accountants. It’s whether accountants who embrace AI will outcompete those who don’t.

That distinction matters enormously, and the data makes it clearer than ever.

How AI in Accounting Is Already Reshaping Productivity

Before we assess the human cost, we must first understand the scale of AI’s operational impact. The numbers are striking.

The global AI accounting market was recently estimated by DualEntry at approximately $10.87 billion, with projections placing that figure significantly higher by the end of this decade. AI-powered tools are now embedded in audit workflows, tax compliance engines, accounts payable automation, and real-time financial forecasting. What once required a team of analysts for three days can now be completed in hours, sometimes minutes.

Stanford Graduate School of Business research on AI-assisted professional workflows found productivity gains of roughly 12% in financial reporting accuracy and speed when AI tools were deployed alongside skilled professionals. This is not about replacing human judgment; it is about amplifying it. The model that emerges from this data is collaborative, not competitive.

Deloitte’s most recent AI report reveals that worker access to AI tools has increased by 50% in a single year, marking a tectonic shift in how firms onboard, train, and deploy talent. Tasks that were once the bread and butter of entry-level accountants — reconciliations, data entry, variance analysis — are being automated at scale. But this is not inherently a loss. As Deloitte’s research notes, automation of routine tasks frees higher-order cognitive capacity for advisory work, risk analysis, and strategic counsel — functions where human accountants remain irreplaceable.

AI Impact on Accounting Jobs: Reshaping, Not Replacing

Here is where the nuance becomes critical — and where much of the public discourse gets it wrong.

The United States Bureau of Labor Statistics (BLS), as cited by Careery.pro, projects 5% job growth for accountants and auditors through 2034, which sits comfortably at the average growth rate for all occupations. That is not the trajectory of a dying profession. That is the trajectory of a profession in transformation.

Consider what that transformation looks like at ground level:

- Routine compliance tasks (data entry, invoice matching, basic reconciliations) — increasingly automated

- Tax preparation for standard cases — largely handled by AI platforms with minimal human intervention

- Audit sampling and anomaly detection — AI outperforms human-only review in both speed and pattern recognition

- Advisory services, forensic accounting, M&A due diligence, ESG reporting — growing in complexity and demand

- AI governance and compliance oversight — an entirely new category of roles that did not exist five years ago

Gartner’s research on finance function transformation supports this picture, projecting that by the late 2020s, finance departments will dedicate a larger share of resources to insight generation and strategic planning than to transactional processing. AI handles the transaction layer. Humans own the insight layer.

The AI impact on accounting jobs, in other words, is not mass unemployment. It is mass redeployment — upward, toward more complex and more valued work.

Wages, Inequality, and the Premium on AI Fluency

Not all accountants will benefit equally. The data on wage dynamics carries an important warning.

PwC’s 2025 Global AI Jobs Barometer found that industries with higher AI exposure are experiencing wage growth approximately two times faster than sectors with low AI exposure. For accountants, the implication is stark: professionals who develop AI fluency command a growing wage premium, while those who resist upskilling risk being left behind — not by AI directly, but by AI-proficient peers.

This creates a bifurcation within the profession. On one end: accountants who use AI as a force multiplier, taking on higher-complexity work, billing more hours at higher rates, and expanding their advisory scope. On the other: accountants who remain anchored to task-based roles that AI can increasingly replicate at a fraction of the cost.

The signal for professionals is unambiguous. AI fluency is no longer a differentiator. In the context of AI in accountancy in 2026, it is quickly becoming table stakes.

Thomson Reuters Institute research on the future of professional services echoes this: firms that invest in AI tools alongside human capital development are seeing measurably better client outcomes, stronger retention, and faster revenue growth than those that deploy AI without an accompanying talent strategy. Technology alone is not the answer. Technology combined with skilled human judgment is.

A Global Lens: Singapore, Asia, and the ISCA Perspective

The conversation around AI in accounting is not uniform across geographies. Different regulatory environments, economic structures, and labor markets produce different outcomes — and some of the most instructive cases are emerging from Asia.

Singapore offers a particularly compelling study. ISCA, which represents the country’s chartered accounting profession, has been among the more forward-thinking bodies globally when it comes to AI adoption frameworks. In a landmark study on AI readiness, ISCA found that 85% of accounting professionals expressed willingness to adopt AI tools in their workflows — a figure that reflects both the pragmatism of Singapore’s professional culture and the effectiveness of ISCA’s ongoing education and advocacy programs.

This contrasts with more hesitant adoption curves in parts of Europe and North America, where regulatory ambiguity around AI in audit and compliance has slowed institutional uptake. Singapore’s Accounting and Corporate Regulatory Authority (ACRA) has worked in tandem with ISCA to create a structured but enabling environment for AI deployment in financial services — a model that other jurisdictions are beginning to study carefully.

In the broader Asia-Pacific context, the MIT Sloan Management Review has highlighted that Asian markets are experiencing faster AI adoption in finance functions partly because of newer digital infrastructure and a younger workforce with higher baseline digital fluency. China, South Korea, and Singapore are all investing heavily in AI-driven audit and tax technology, creating competitive pressure on Western accounting firms to accelerate their own integration strategies.

For accounting professionals in the region, this is an opportunity. The firms and individuals that move earliest and most strategically will define what AI reshaping accounting roles looks like in practice — building the playbooks that the rest of the world will eventually follow.

The Future of Accounting with AI: New Roles, New Skills, New Demands

What, concretely, does the future of accounting with AI look like? Several emerging roles are already moving from concept to job posting.

AI Compliance Officers sit at the intersection of accounting expertise and AI governance. As regulators in the EU, US, and Southeast Asia begin requiring auditable AI decision trails for financial systems, firms need professionals who understand both the technical logic of AI models and the compliance implications of their outputs. This is fundamentally an accounting role — but one that demands literacy in data science and machine learning fundamentals.

Forensic AI Auditors are being deployed to assess whether AI systems used in financial reporting are producing accurate, unbiased, and regulatorily compliant outputs. Traditional forensic accounting skills — pattern recognition, investigative rigor, understanding of fraud typologies — translate well. But new capabilities in model interpretability and algorithmic bias detection are increasingly required alongside them.

Sustainability and ESG Reporting Strategists are in surging demand as public companies face tightening mandatory disclosure requirements across multiple jurisdictions. AI can process enormous volumes of supply chain, emissions, and social impact data — but the synthesis, stakeholder communication, and assurance of that data requires seasoned professional judgment that no model can yet replicate.

Chief AI Finance Officers (CAFOs) — a title beginning to appear in technology-forward organizations — blend traditional CFO responsibilities with deep fluency in AI strategy, data architecture, and automation governance. These roles command premium compensation and are likely to multiply rapidly through the rest of the decade.

The skills needed to thrive in these roles are not radically foreign to accountants. Critical thinking, professional skepticism, regulatory knowledge, and communication are already foundational. What changes is the technological overlay: data literacy, prompt engineering, understanding of machine learning outputs, and the ability to evaluate AI-generated analyses with the same rigor previously applied to human-generated ones.

The Bottom Line: Evolution Is Not Optional

The data, viewed in aggregate, tells a coherent and ultimately optimistic story — but one with a clear condition attached.

AI in accounting is not an elimination event. It is an evolution imperative.

Will AI replace accountants? The evidence says no — but it will absolutely replace accountants who fail to evolve. The profession will not shrink; it will shift. The accountants who will struggle are not those facing AI directly. They are those who underestimate AI’s scope, delay adaptation, and cede ground to peers who are moving faster.

The 5% BLS job growth projection, the 85% ISCA adoption willingness rate, and wage growth running roughly twice as fast in AI-exposed industries are not contradictory data points. They form a consistent picture of a profession that is growing in value precisely because its most capable practitioners are using AI to do more, better, faster.

ISCA frames this correctly: the destination is not obsolescence. It is elevation. The accountant of 2030 will not be competing with AI. They will be wielding it — as a diagnostic tool, a compliance engine, a risk detector, and a strategic advisor’s most powerful instrument.

For professionals in the field, the call to action is not complicated. Upskill now. Engage with AI tools at the practice level, not merely in theory. Seek out certifications in data analytics and AI governance. Participate in professional bodies — like ISCA — that are building the frameworks and networks to help members navigate this transition with confidence.

The wave is already here. The question is not whether it will change the profession. It already has. The question now is who will ride it — and who will be left standing on the shore.

Discover more from The Economy

Subscribe to get the latest posts sent to your email.

Analysis

China Export Controls 2026: How Middle East Turmoil Is Slowing Beijing’s Trade Power Play

China’s export controls on rare earths, tungsten, and silver are tightening fast in 2026 — but the Iran war and Hormuz chaos are already denting Beijing’s export engine. A deep analysis.

Picture the view from the Yangshan Deep-Water Port on a clear March morning: cranes moving in hypnotic rhythm, container ships stacked eight stories high, the smell of diesel and ambition mingling in the salt air. Shanghai, the world’s busiest port, has long been a monument to China’s export supremacy. Now picture, simultaneously, the Strait of Hormuz some 5,000 kilometres to the west — tankers at anchor, shipping lanes in disarray, insurance premiums spiking by the hour after a war nobody fully predicted has turned one of the world’s most critical energy arteries into a geopolitical chokepoint.

These two scenes, unfolding in real time, define the central paradox of Chinese trade power in 2026. Beijing is weaponising export controls more aggressively than at any point in its modern economic history — tightening its grip on rare earths, tungsten, antimony, and silver with the confidence of a player who believes it holds all the cards. Yet the very global instability it once navigated with deftness is now biting back, slowing China’s export engine at precisely the moment when export-led growth is not a preference but a lifeline. The March customs data, released today, made that contradiction impossible to ignore.

Why China’s Export Controls Are Soaring in 2026

To understand Beijing’s export-control blitz, you have to understand its logic: supply-chain chokepoints are the new artillery. China does not need aircraft carriers to coerce its rivals when it controls roughly 80% of global tungsten production, dominates rare earth refining at a rate that makes Western alternatives fanciful for years to come, and now holds the licensing key for silver — a metal the United States only formally designated as a “critical mineral” in November 2025.

The architecture assembled by China’s Ministry of Commerce (MOFCOM) since 2023 has grown into something qualitatively different from its earlier, blunter instruments. MOFCOM’s December 2025 notification established state-controlled whitelists for tungsten, antimony, and silver exports covering 2026 and 2027: just 15 companies approved for tungsten, 11 for antimony, and 44 for silver. The designation is the most restrictive tier in China’s export-control hierarchy. Companies are selected first; export volumes managed second. Unlike rare earths — still governed by case-by-case licensing — these three metals now flow through a fixed exporter system that operates, in effect, as a state faucet. Beijing can tighten or loosen at will.

The EU Chamber of Commerce in China captured the alarm among multinationals: a flash survey of members in November found that a majority of respondents had been or expected to be affected by China’s expanding controls. Silver’s elevation to strategic material status — placing it on the same regulatory footing as rare earths — was particularly striking. Its uses span electronics, solar cells, and defense systems. Every one of those sectors is a pressure point in the U.S.-China technological rivalry.

The Rare Earth Détente Is More Theatrical Than Real

On the surface, October 2025 looked like a moment of diplomatic breakthrough. Following the Xi-Trump summit, China announced the suspension of its sweeping new rare-earth export controls — specifically, MOFCOM Announcements No. 70 and No. 72 — pausing both the October rare-earth restrictions and U.S.-specific dual-use licensing requirements until November 2026. Trump declared it a victory. Markets exhaled.

But look beneath the headline and the architecture is entirely intact. China’s addition of seven medium- and heavy-rare-earth elements — samarium, gadolinium, terbium, dysprosium, lutetium, scandium, and yttrium — to its Dual-Use Items Control List under Announcement 18 (2025) was never suspended. Neither were the earlier 2025 controls on tungsten, tellurium, bismuth, molybdenum, and indium. Most consequentially, the extraterritorial provisions — the so-called “50% rule,” which requires export licenses for products made outside China if they contain Chinese-origin materials or were produced using Chinese technologies — remain a live wire running through global semiconductor and battery supply chains.

The pause, in short, is not a retreat. It is a recalibration, a strategic exhale before the next tightening cycle. As legal analysts at Clark Hill put it plainly: expect regulatory tightening to return in late 2026 if bilateral conditions deteriorate. Beijing has merely exchanged a sprinting pace for a walking one, keeping its destination unchanged.

The Middle East Wild Card Crushing China’s Export Momentum

Then came February 28, 2026, and everything changed.

U.S. and Israeli strikes on Iran triggered a war that rapidly scrambled the assumptions underpinning China’s export-led growth model. The Strait of Hormuz, through which roughly 20% of global oil trade and a comparable share of LNG normally transit, effectively seized up. Commercial tankers chose not to risk passage. Before the war, China received approximately 5.35 million barrels of oil per day via the Strait of Hormuz. That figure collapsed to around 1.22 million barrels per day, coming exclusively from Iranian tankers: a reduction of about 77%.
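The size of that collapse can be checked directly from the two flow figures quoted above. The snippet below is a minimal sketch of the arithmetic only; the function and variable names are illustrative and do not come from any cited dataset.

```python
def percent_reduction(before: float, after: float) -> float:
    """Return the percentage drop from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100.0


# Figures quoted in the article, in million barrels per day.
pre_war_mbpd = 5.35
wartime_mbpd = 1.22

drop = percent_reduction(pre_war_mbpd, wartime_mbpd)
print(f"Hormuz-routed crude to China fell by {drop:.1f}%")
```

On these inputs the drop works out to a little over 77%.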

As Henry Tugendhat of the Washington Institute for Near East Policy notes, “Hormuz remains China’s main concern, because about 45% of its oil imports pass through it.” For Beijing, this was not an abstraction. It was an immediate, visceral shock to the manufacturing cost base. Chinese refineries began reducing operating rates or accelerating maintenance schedules to avoid buying expensive crude. Energy-intensive sectors (steel, petrochemicals, cement) felt it first. But the ripple spread fast into the broader export machine.

The March customs data, released this morning, confirmed what economists had been dreading. China’s export growth slowed to just 2.5% year-on-year in March — a five-month low, and a stunning collapse from the 21.8% surge recorded in January and February. Analysts polled by Reuters had forecast growth of 8.3%. The actual print was less than a third of that. Outbound shipments, which just eight weeks ago were on pace to eclipse last year’s record $1.2 trillion trade surplus, stumbled badly in the first full month of the Iran war.
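For readers who want to verify the comparison, the sketch below sets the March print against the Reuters poll forecast and the January-February pace, using only the growth rates quoted above. The variable names are illustrative.

```python
# Year-on-year export growth rates quoted in the article, in percent.
actual_growth = 2.5      # March print
forecast_growth = 8.3    # Reuters poll forecast
prior_growth = 21.8      # January-February pace

# Share of the forecast that was actually delivered.
share_of_forecast = actual_growth / forecast_growth

# Gaps expressed in percentage points, not percent.
forecast_miss_pp = forecast_growth - actual_growth
slowdown_pp = prior_growth - actual_growth

print(f"Actual growth was {share_of_forecast:.0%} of the forecast")
print(f"Miss versus forecast: {forecast_miss_pp:.1f} percentage points")
print(f"Slowdown versus Jan-Feb: {slowdown_pp:.1f} percentage points")
```

The share comes out just under one third, which is consistent with the "less than a third" description.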

Rare Earths, Tungsten, and the New Geopolitical Chessboard

The cruel irony of China’s position in 2026 is not lost on Beijing’s economic planners. The country has spent the better part of three years engineering the most sophisticated export-control system in its history, designed to maximise geopolitical leverage while maintaining the appearance of regulatory normalcy. And yet the very global disorder that its strategists once viewed as fertile ground for expanding influence — American overreach, Middle East fragility, European energy dependence — is now delivering body blows to the export revenues that fuel the domestic economy.

Consider the arithmetic. Tungsten exports fell 13.75% year-on-year in the first nine months of 2025, even before the new whitelist took effect. That decline predated the Iran war’s disruptions; it reflected global demand softness and supply-chain reconfiguration by Western buyers accelerating their diversification efforts. Now, with input price inflation for Chinese manufacturers surging to its highest level since March 2022 — and output price inflation hitting a four-year peak, according to the RatingDog/S&P Global PMI — the cost pressure is compounding.

The official manufacturing PMI rebounded to 50.4 in March from 49.0 in February, the strongest reading in twelve months, which offered some comfort. But the private-sector RatingDog PMI told a more honest story: it fell to 50.8 from a five-year high of 52.1 in February. The new export orders sub-index — the most forward-looking indicator of actual foreign demand — remained in contraction at 49.1. The headline may read expansion, but the pipeline is thinning.
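The readings above are easier to compare once each is set against the 50-point line that conventionally separates expansion from contraction in PMI surveys. The sketch below is illustrative only: the labels and the helper function are assumptions made for this example, and the values are the ones quoted in the paragraph.

```python
NEUTRAL_LEVEL = 50.0  # conventional no-change mark for PMI diffusion indices


def pmi_signal(reading: float) -> str:
    """Classify a PMI reading relative to the 50-point neutral level."""
    if reading > NEUTRAL_LEVEL:
        return "expansion"
    if reading < NEUTRAL_LEVEL:
        return "contraction"
    return "neutral"


# Readings quoted in the article; the labels are illustrative.
readings = {
    "Official PMI, February": 49.0,
    "Official PMI, March": 50.4,
    "RatingDog PMI, February": 52.1,
    "RatingDog PMI, March": 50.8,
    "New export orders sub-index": 49.1,
}

for label, value in readings.items():
    print(f"{label}: {value:.1f} ({pmi_signal(value)})")
```

Both headline indices sit above 50 in March, while the new export orders sub-index sits below it, which is the divergence the paragraph describes.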

How the Iran War Is Rewiring China’s Export Map

The geographic breakdown of March’s trade data illuminates the structural shifts now underway. China’s exports to the United States plunged 26.5% year-on-year in March, steeper than the 11% drop recorded in January and February. The deterioration was driven by Trump’s elevated tariffs, which have progressively choked off one of China’s most lucrative markets. EU-bound shipments rose 8.6% and Southeast Asian exports climbed 6.9%, reflecting Beijing’s deliberate pivot toward trade diversification as Washington weaponises its own levers.

But the Middle East — once a growing destination for Chinese machinery, electronics, and manufactured goods — is now a graveyard of cancelled orders. As the Asian Development Bank and TIME have documented, Middle East buyers have abruptly halted purchases amid maritime uncertainty. Jebel Ali Port in Dubai, one of the world’s busiest container terminals, suspended operations following drone strikes, according to the Financial Times. Thai rice, Indian agricultural goods, and Chinese consumer electronics are all sitting in holding patterns at Asian ports, waiting for a maritime corridor that no longer reliably exists.

For Chinese exporters, the calculus has turned grim in ways that few were modelling at the start of 2026. Freight forwarders warned in early March of extended transit times, irregular schedules, and significant rate increases as carriers suspended Middle East operations. Shipping insurance premiums have spiked to levels not seen since the peak of the Red Sea crisis. “China’s exports have decelerated as the Iran war starts to affect global demand and supply chains,” said Gary Ng, senior Asia Pacific economist at Natixis. Bank of America economists led by Helen Qiao have similarly warned that the risks will “arise from a persistent global slowdown in overall demand if the conflict lasts longer than currently expected.”

Beijing’s Growth Target and the Export Dependency Trap

Against this backdrop, China’s leaders have set a 2026 growth target of 4.5% to 5% — the lowest since 1991. That target was already cautious before February 28. Now it carries an asterisk the size of the Hormuz strait.

The underlying problem is structural, and the Iran war has merely accelerated its visibility. China’s domestic consumption engine remains badly misfiring. A years-long property sector slump has wiped out household wealth, dampened consumer confidence, and created the deflationary undertow that has haunted Chinese factory margins for much of the past two years. Exports were never merely a growth strategy; they became a substitute for the domestic demand rebalancing that successive Five-Year Plans promised but never delivered at scale.

The 15th Five-Year Plan (2026-2030), formalised at the National People’s Congress in March, commits again to shifting the growth engine toward domestic consumption. But rebalancing is a decade-long project at minimum, and as Dan Wang of Eurasia Group observed acutely, “exports and PMI may face risks in the second half of the year, as the Iranian issue could lead to a recession in major economies, especially the EU, which is China’s most important trading destination.”

That is the existential tension at the heart of Beijing’s 2026 economic calendar: the export controls project Chinese strength, but the export slowdown reveals Chinese fragility. The two narratives are not separate stories — they are the same story, told from opposite ends of the supply chain.

What This Means for Global Supply Chains and Western Strategy

For Western governments and businesses, the lessons of the first four months of 2026 are stark and should concentrate minds.

First, the “pause” in China’s rare-earth controls should not be mistaken for a strategic retreat. Diversification timelines for rare earth processing remain measured in years, not quarters. Australia’s Lynas Rare Earths, the largest producer of separated rare earths outside China, still sends oxides to China for refining. Australia is not expected to achieve full refining independence until well beyond 2026. The whitelist architecture for tungsten, antimony, and silver means that even if rare-earth licensing eases temporarily, the mineral chokepoints are multiplying rather than narrowing.

Second, the 45-day license review window for controlled materials is itself a weapon of strategic delay. As one analyst put it dryly: “delay is the new denial.” A manufacturer in Germany or Japan requiring controlled tungsten for defence production cannot absorb a 45-day uncertainty in its supply chain indefinitely. The bureaucratic friction is by design.

Third, China’s pivot to Europe and Southeast Asia as export markets — while strategically sound as a hedge against U.S. tariff pressure — is directly threatened by the Iran war’s energy shock. The ING macro team’s analysis is unsparing: if higher energy prices and shipping disruptions persist or worsen, pressure will build materially in the months ahead.

For Western policymakers, the playbook should be clear even if execution remains painful. The U.S. Project Vault — a $12 billion strategic critical minerals reserve backed by Export-Import Bank financing — is a necessary if belated step. A formal “critical minerals club” among allies, which the U.S. Trade Representative floated for public comment in early 2026, would accelerate diversification by pooling demand signals and investment capital across democratic market economies. Europe needs to move faster on processing capacity: consuming 40% of the world’s critical minerals while refining almost none of them is a strategic liability that no amount of diplomatic finesse can paper over.

For businesses, the message is harsher: any supply chain that remains single-source dependent on China for controlled materials in 2026 is operating on borrowed time and borrowed luck. “Diversification is no longer optional,” as one industry analyst noted simply. “Delay is the new denial.”

What Happens Next: The 2026–2027 Outlook

The trajectory for the remainder of 2026 hinges on two variables: how quickly the Iran war de-escalates (or doesn’t), and whether the U.S.-China diplomatic channel holds open enough to prevent the re-imposition of the suspended export controls.

On the first variable, Trump’s planned May visit to Beijing — already delayed once by the war — will be the most closely watched diplomatic event of the year. The meeting carries enormous stakes: a visible détente could stabilise the trade outlook for H2 2026, rebuild business confidence, and give China the export recovery that its growth target demands. A collapse in negotiations, or a military escalation in the Gulf that outlasts Beijing’s ability to manage its energy shock, could push China’s growth below the 4.5% floor in ways that create serious domestic political pressure.

On the second, MOFCOM Announcement 70’s suspension expires in November 2026. If the bilateral atmosphere deteriorates — and there are many ways it could, from Taiwan tensions to semiconductor export controls to Beijing’s domestic AI chip ban — the rare-earth controls will return, and likely in a more comprehensive form than before. Companies that used the pause to secure long-term general licenses and diversify supply are buying genuine resilience. Those who treated the pause as a return to normalcy are setting themselves up for a very difficult winter.

The deeper truth is that China’s export-control strategy and the Middle East disruption are not simply colliding forces — they are revealing the same underlying fact: the globalisation that Beijing and Washington both profited from for forty years is over. What has replaced it is a managed fragmentation, in which every mineral shipment, every shipping lane, and every license review is a move in a game with no agreed rules and no obvious endgame.

Standing in Yangshan port and watching the cranes, one is tempted to conclude that China still holds structural advantages that no single war or tariff can dissolve. Its dominance in green technology manufacturing — solar panels, batteries, electric vehicles — means that even an energy shock may paradoxically accelerate global demand for Chinese renewables. The inquiries from European, Indian, and East African buyers for Chinese solar and battery products have, by multiple accounts, increased since the Hormuz crisis began. China’s industrial policy may be generating the very demand for its products that punitive Western tariffs were meant to suppress.

But a 2.5% export growth print in March, when 21.8% was recorded just eight weeks earlier, is not a blip. It is a warning shot. Beijing is learning, in real time, that the architecture of trade coercion it has spent years constructing is most powerful when global commerce flows smoothly — and most exposed when it doesn’t. The Middle East has handed China a mirror, and the reflection is more complicated than Beijing’s trade strategists expected.

Policy Recommendations

For Western Governments:

- Accelerate critical mineral processing capacity at home and among allies, with binding investment timelines, not aspirational targets

- Formalise a “critical minerals club” with democratic partners, pooling demand guarantees and political risk insurance for new refining projects

- Extend strategic mineral stockpiles to cover at minimum 180-day supply disruption scenarios, spanning not just rare earths but tungsten, antimony, and silver

- Develop coordinated shipping insurance backstops for Gulf routes, to prevent maritime insurance crises from becoming de facto trade embargoes against friendly nations

For Businesses:

- Map your top-tier supplier exposure to China’s whitelist-controlled materials now, not after the next licensing shock

- Secure general-purpose export licenses during the current MOFCOM suspension window — it closes in November 2026

- Build geographic diversification into sourcing: Australia, Canada, South Africa, and Kazakhstan all offer partial alternatives for minerals currently dominated by Chinese supply

- Model your supply chain for a scenario in which MOFCOM controls return at full strength in December 2026 — because that scenario has a realistic probability

The cranes at Yangshan will keep moving. But the world they are loading containers for is no longer the one that made them so indispensable in the first place.


Opinion

Oil Prices Soar Above $100 a Barrel. This Time, the World Changes With Them.

Live Prices — April 13, 2026

| Benchmark | Price | Change |
|---|---|---|
| Brent Crude | $102.80 | ▲ +7.98% |
| WTI | $104.88 | ▲ +8.61% |
| U.S. Gas (avg) | $4.12/gal | ▲ +38% since Feb. |
| Hormuz Traffic | 17 ships/day | ▼ vs. 130 pre-war |

As Brent crude clears $102 and WTI tops $104 in a single Monday session, the U.S. Navy prepares to blockade Iranian ports and a fragile ceasefire teeters on collapse. This is not a price spike. It is a civilisational stress test — and the global economy is failing it.

On the morning of April 13, 2026, the global economy received a message written in the price of crude oil. WTI futures for May delivery vaulted nearly 8% to $104.04 a barrel while Brent, the international benchmark, rose above $102 — the third time in six weeks that oil prices have soared above $100 a barrel. The catalyst was grimly familiar by now: the collapse of U.S.-Iran peace negotiations in Islamabad and President Donald Trump’s announcement that the U.S. Navy would begin blockading all maritime traffic entering or leaving Iranian ports, effective 10 a.m. Eastern Time. It was an extraordinary escalation. It was also, in many ways, entirely predictable.

What is not predictable — what no model, no spreadsheet, and no geopolitical risk matrix has successfully priced — is how long this goes on, how far it spreads, and what kind of global economy emerges on the other side. This is not just another oil price spike. The 1973 Arab oil embargo, the 1979 Iranian Revolution, the Gulf War shocks of 1990: historians will one day place the 2026 Hormuz Crisis in the same catalogue of civilisational economic ruptures. The difference is that this time, the chokepoint has not just been threatened — it has been functionally closed for six weeks, and the world’s largest naval power is now formally blockading it from both ends.

KEY FIGURES

- +55% — Brent crude rise since the Iran war began on Feb. 28, 2026

- 17 — Ships transiting Hormuz on Saturday, vs. 130+ daily pre-war

- $119 — Brent peak reached in early April 2026

- 30% — Goldman Sachs-estimated U.S. recession probability, up from 20%

The Anatomy of the Largest Oil Supply Disruption in History

The numbers are almost surreal in their severity. Before the U.S.-Israeli strikes on Iran began on February 28, the Strait of Hormuz, a 21-mile-wide channel between Iran and Oman, handled roughly 25% of the world’s seaborne oil and 20% of its LNG. More than 130 vessels transited daily. That flow has been reduced to a trickle. On Saturday, April 11, only 17 ships made the passage, according to maritime analytics firm Windward. The International Energy Agency has called the current disruption the largest supply shock in the history of the global oil market, a statement it does not make lightly. Production losses in the Middle East have been running at roughly 11 million barrels per day, with Goldman Sachs analysts warning they could peak at 17 million before any recovery begins.

Iran has not simply blockaded the strait; it has monetised it. Tehran began charging tolls of up to $2 million per ship for passage, a sovereign toll road carved from one of humanity’s most critical energy arteries. Oil industry executives have been lobbying Washington frantically to reject any deal that concedes Iran’s de facto control of the waterway. The Revolutionary Guards have warned that military vessels approaching the strait will be “dealt with harshly and decisively.” Ali Akbar Velayati, an adviser to Iran’s Supreme Leader, put it bluntly: the “key to the Strait of Hormuz” remains in Tehran’s hands.

And then came Sunday. After marathon talks in Islamabad collapsed — Vice President JD Vance citing Iran’s failure to provide “an affirmative commitment” to forgo nuclear weapons — President Trump posted to social media announcing a full naval blockade of Iranian ports. U.S. Central Command clarified the scope: all vessels from all nations, entering or leaving Iranian ports on the Arabian Gulf and Gulf of Oman, would be interdicted beginning Monday morning. Markets, already frayed, buckled immediately.

“Transit through the Strait of Hormuz remains restricted, coordinated, and selectively enforced. There has been no return to open commercial navigation.”

— Windward Maritime Intelligence, April 2026

Why Oil Prices Above $100 a Barrel Are Different This Time

Context, always context. When Brent crossed $100 in 2008, it was on the back of a commodity supercycle and voracious pre-crisis demand. When it briefly touched triple digits again in 2011 and 2022, those spikes were bounded by recoverable circumstances — Libyan disruption here, Russian invasion there. What defines the current oil price surge in 2026 is the combination of three factors that have never simultaneously aligned in the modern era: a total physical closure of the world’s most critical maritime chokepoint, an active military confrontation between the United States and Iran, and a global economy already weakened by years of tightening monetary policy and tariff escalation.

The physical-versus-paper market divergence alone should unnerve policymakers. While Brent futures trade around $102 this morning, physical crude barrels for immediate delivery have been changing hands at record premiums, with some grades priced at approximately $150 a barrel. That is not a market in orderly price discovery. That is a market screaming that actual oil (the kind you put in a tanker, refine, and burn) is becoming genuinely scarce in ways that paper futures cannot fully capture.

Major Oil Supply Shocks: A Historical Comparison

| Event | Year | Peak Price Surge | Duration | % of Global Supply Affected |
|---|---|---|---|---|
| Arab Oil Embargo | 1973 | ~+400% (over 12 months) | ~5 months | ~7–9% |
| Iranian Revolution | 1979 | ~+150% | ~12 months | ~4% |
| Gulf War (Kuwait invasion) | 1990 | ~+130% | ~6 months | ~5% |
| Russia-Ukraine War | 2022 | ~+80% (Brent peak ~$139) | ~4 months peak | ~8–10% |
| 2026 Hormuz Crisis | 2026 | +55% in 6 weeks; Brent from $70 → $119 peak | Ongoing | ~20%+ (Hormuz total) |
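For readers who want to check the surge figures in the last row, a minimal Python sketch of the percentage arithmetic follows. The $70 starting level and the $119 peak come from the table, and $102.80 is the Brent price quoted at the top of this piece; the helper name `pct_change` is illustrative. Neither result equals the +55% headline figure, which presumably rests on a different baseline or reference date, so treat this as a rough cross-check, not a reconstruction of how that figure was derived.

```python
def pct_change(old: float, new: float) -> float:
    """Return the percentage change from old to new."""
    return (new - old) / old * 100.0


# Figures quoted in this article (USD per barrel).
PRE_WAR_BRENT = 70.00   # starting level shown in the comparison table
PEAK_BRENT = 119.00     # early-April peak
LIVE_BRENT = 102.80     # price quoted for April 13

if __name__ == "__main__":
    print(f"Pre-war to peak: {pct_change(PRE_WAR_BRENT, PEAK_BRENT):+.1f}%")
    print(f"Pre-war to April 13: {pct_change(PRE_WAR_BRENT, LIVE_BRENT):+.1f}%")
```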

The Economic Impact of Oil Over $100: A Global Reckoning

The cascade effects of sustained oil prices above $100 a barrel are no longer theoretical. They are unfolding in real time, and the transmission mechanisms differ sharply by geography.

The United States: Inflation, the Fed, and the $4-a-Gallon Problem

American motorists are paying an average of $4.12 per gallon at the pump — up 38% since the war began in late February. For a country where gasoline pricing is a leading indicator of presidential approval ratings, this creates an acute political problem for an administration that launched the military campaign in the first place. Goldman Sachs has raised its 12-month U.S. recession probability to 30%, up from 20% before the conflict began, and elevated its 2026 inflation forecast to roughly 3% — a figure that would make the Federal Reserve’s dual mandate look increasingly unachievable. The Fed now faces its least comfortable scenario: a supply-driven inflationary shock paired with slowing growth, a stagflationary bind that rate tools are poorly designed to address.

Europe: An Energy Crisis Stacked on an Energy Crisis

For Europe, the timing could scarcely be worse. The continent entered 2026 with gas storage at roughly 30% capacity following a harsh winter, and its dependence on Qatari LNG — which transits Hormuz — has proved a fatal vulnerability. Dutch TTF gas benchmarks nearly doubled to over €60/MWh by mid-March, while the European Central Bank postponed its planned rate reductions on March 19, raising its inflation forecast and cutting GDP projections simultaneously. The ECB now warns of stagflation for energy-dependent economies; UK inflation is expected to breach 5% this year. Germany and Italy — the continent’s industrial engines — face the real possibility of technical recession by year-end, with chemical and steel manufacturers already imposing surcharges of up to 30% on industrial customers.

Asia: The Quiet Crisis

Asia’s exposure is less discussed but arguably more profound. In 2024, an estimated 84% of crude flowing through Hormuz was destined for Asian markets. China, which receives a third of its oil via the strait, has been accumulating reserves and strategically holding its hand — but even a billion barrels of reserve buys only a few months of supply at normal consumption rates. India has dispatched destroyers to escort tankers, launching Operation Sankalp to evacuate Indian-flagged LPG carriers from the Gulf of Oman. Japan and South Korea, overwhelmingly dependent on Middle Eastern crude, have activated emergency reserve release programs. The ASEAN economies are, in the IMF’s language, experiencing a severe “terms-of-trade shock” that is accelerating currency depreciation and eroding import capacity across the region simultaneously.

Goldman Sachs and the Anatomy of a $120 Scenario

No institution has been more forensic in its scenario modelling than Goldman Sachs, and its language has grown progressively more alarming. In a note carried by Bloomberg last Thursday, Goldman warned that if the Strait of Hormuz remains mostly shut for another month, Brent would average above $100 per barrel for the remainder of 2026 — with Q3 averaging $120 and Q4 at $115. The bank’s lead commodity analyst Daan Struyven described the situation as “fluid,” which, in the measured language of Wall Street research, reads as genuinely alarming.

Wood Mackenzie’s analysis is blunter still: if Brent averages $100 per barrel in 2026, global economic growth slows to 1.7%, down from the pre-war forecast of 2.5%. At $200 oil — a figure that was science fiction six weeks ago and is now a tail risk in Barclays’ scenario models — global recession becomes mathematically inevitable, with the world economy contracting by approximately 0.5%. The most chilling detail in the Goldman note is the observation that even after the Strait reopens, oil prices will not fall quickly back to pre-war levels. The shock has forced markets to permanently reprice the geopolitical risk premium embedded in Persian Gulf production concentration. That repricing is already baked into long-dated oil forwards.

“If a resolution to the war proves unachievable, we expect Brent to trade upwards again, with higher prices and demand destruction ultimately balancing the market.”

— Wood Mackenzie Energy Analysts, April 2026

The Geopolitical Oil Crisis: Strait of Hormuz as the New Berlin Wall

There is a structural argument buried beneath the daily price moves that deserves serious attention, because it will outlast whatever ceasefire or deal eventually materialises. The Strait of Hormuz has always been the world’s single greatest energy chokepoint — a geographic accident that turned a narrow Persian Gulf passage into the jugular vein of the global industrial economy. What the 2026 crisis has done is demonstrate, for the first time at full operational scale, exactly how catastrophic its closure actually is. Energy planners and policymakers have long known this intellectually. They now know it viscerally, with $4-a-gallon gasoline and rationing notices.

The strategic consequences will be generational. Every major oil-importing nation is now conducting emergency reviews of its energy supply diversification posture. The U.S. shale industry — constrained in the near term to roughly 1.5 million additional barrels per day — will receive a decade of investment incentives. Saudi Arabia and the UAE, which have limited alternative pipeline capacity via Yanbu and Fujairah respectively (a combined ceiling of roughly 9 million barrels per day against Hormuz’s normal 20 million), will face enormous pressure to expand redundant infrastructure. The energy transition, already turbocharged by post-pandemic economics, now has a third accelerant: geopolitical necessity. When a single authoritarian government can threaten to collapse the global economy by closing a 21-mile strait, the case for renewable energy independence ceases to be an environmental argument. It becomes a national security imperative.
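The bypass arithmetic in that parenthesis can be made explicit. The Python sketch below uses only the two figures quoted above (roughly 9 million barrels per day of combined pipeline capacity against roughly 20 million through Hormuz); the function name and the assumption that both pipelines run at their full ceiling are illustrative simplifications.

```python
def bypass_gap(normal_flow_mbd: float, bypass_ceiling_mbd: float) -> tuple[float, float]:
    """Return (shortfall in million b/d, share of normal flow the bypass covers)."""
    shortfall = normal_flow_mbd - bypass_ceiling_mbd
    coverage = bypass_ceiling_mbd / normal_flow_mbd
    return shortfall, coverage


HORMUZ_NORMAL_MBD = 20.0   # approximate pre-war flow quoted in the text
BYPASS_CEILING_MBD = 9.0   # approximate Yanbu + Fujairah ceiling quoted in the text

if __name__ == "__main__":
    shortfall, coverage = bypass_gap(HORMUZ_NORMAL_MBD, BYPASS_CEILING_MBD)
    print(f"Uncovered flow: {shortfall:.1f} million b/d")
    print(f"Bypass covers {coverage:.0%} of normal Hormuz volume")
```

On those inputs, even full use of both pipelines leaves about 11 million barrels per day with no alternative route.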

What Comes Next: Three Scenarios for the Oil Price Outlook

Markets are, at their core, probability machines. And right now, the probability distributions on oil price scenarios have never been wider or more consequential. Three plausible trajectories present themselves.

Scenario 1: Negotiated resolution (base case, narrowing). The blockade and counter-blockade create sufficient economic pain on both sides (Iranian export revenues collapse while U.S. domestic inflation becomes a serious political liability) to force a resumption of talks. A deal that includes Iranian nuclear concessions and a Hormuz reopening could see Brent retreat toward $80–$85 by year-end, consistent with Goldman’s conditional base case. The window for this scenario is closing fast.

Scenario 2: Frozen stalemate (elevated probability). The ceasefire technically holds but the Strait remains in Iran’s supervised pause: open to some nations, closed to others, with tolls, IRGC escorts, and the constant threat of escalation. Oil prices trade in a $95–$115 range for the remainder of the year. Global growth slows to around 2%, and the Fed and ECB remain paralysed between inflation and recession. This is the slow bleed scenario, and arguably the most likely.

Scenario 3: Escalation (tail risk, but priced insufficiently). Limited U.S. strikes on Iran, which the Wall Street Journal reported Trump is actively considering, trigger Iranian retaliation against Gulf production infrastructure. Brent tests $150 or higher. In this scenario, global recession is no longer a tail risk; it is the base case. The physical crude market, already pricing some grades at $150, would simply catch up to what it already knows.

A Final Word on What $100 Oil Actually Means

There is a tendency in financial commentary to treat $100-a-barrel oil as a number — a round, symbolic threshold that triggers algorithmic reactions and attention-grabbing headlines. But it is worth sitting with what it actually represents. Every barrel of oil that costs $104 instead of $70 is a transfer of wealth from oil-importing nations — from the factories of Germany, the commuters of Manila, the farmers of Brazil who depend on Hormuz-transited fertilizers — to a geopolitical conflict that most of the world’s population did not choose and cannot control.

The IEA has called this the largest oil supply disruption in the history of the global market. That distinction matters. Every previous shock eventually resolved — through diplomacy, demand destruction, technological substitution, or simple exhaustion. This one will too. But the world that emerges from the 2026 Hormuz crisis will be structurally different from the one that entered it: more fragmented in its energy supply chains, more accelerated in its renewable transition, more alert to the terrifying leverage embedded in a 21-mile waterway that sits entirely within Iranian territorial reach.

When they write the history of how the world finally, truly moved beyond its dependence on Middle Eastern oil, the chapter title may well be: April 2026.


Analysis

Alabama Is Powering Its Startup Boom Through Community and Investment

The Alabama startup boom is not an accident. It is not a fluke of geography, a windfall from a single anchor tenant, or the kind of frothy exuberance that tends to inflate and collapse in coastal corridors. It is, instead, the deliberate consequence of a deceptively simple idea: that founders, not capital, should sit at the center of an innovation ecosystem—and that when a state wraps itself around its entrepreneurs rather than the other way around, extraordinary things happen.

In two decades covering regional innovation from Tel Aviv to Tallinn and from Nairobi to Nashville, I have rarely encountered a model as coherent—or as replicable—as the one quietly assembling itself across Alabama. As U.S. venture capital continues its uneven recovery (the Q4 2025 PitchBook-NVCA Venture Monitor describes a market where “deal counts rose, multiple high-profile IPOs dominated headlines, and AI attracted a record amount of capital,” yet half of all venture dollars flowed into just 0.05% of deals), the geography of opportunity is shifting in ways most investors have not yet fully priced. Alabama is ahead of that curve.

1. Why a Founder-First Ecosystem Is Alabama’s Secret Weapon

The phrase “founder-first” is overused in startup circles. It tends to mean little beyond a firm’s marketing deck. In Alabama, it describes operational reality.

The Economic Development Partnership of Alabama (EDPA) anchors this philosophy through Alabama Launchpad, a program that has invested more than $6 million in early-stage companies—a portfolio now valued collectively at $1 billion. That’s a return profile that would turn heads in any fund memo. But the numbers alone miss the point. What Alabama Launchpad offers that Sand Hill Road cannot is proximity—a white-glove approach to connecting founders with the right resource at the right inflection point, rather than a transactional relationship governed by ownership percentages.
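As a rough sense of scale, the ratio between those two figures can be computed directly. This Python sketch assumes the $1 billion is the combined valuation of the portfolio companies and uses exactly $6 million as the amount invested (the text says “more than”), so the result is an upper-bound valuation multiple, not a realised return on the program’s stake. The function name is illustrative.

```python
def valuation_multiple(invested: float, portfolio_value: float) -> float:
    """Ratio of current portfolio valuation to capital invested."""
    if invested <= 0:
        raise ValueError("invested must be positive")
    return portfolio_value / invested


INVESTED_USD = 6_000_000              # "more than $6 million", taken at face value
PORTFOLIO_VALUE_USD = 1_000_000_000   # collective valuation quoted in the text

if __name__ == "__main__":
    multiple = valuation_multiple(INVESTED_USD, PORTFOLIO_VALUE_USD)
    print(f"Portfolio valuation is about {multiple:.0f}x the capital invested")
```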

“We want to offer our founders white-glove service when it comes to connecting you with the resources that are right for you and your team at that time,” said Audrey Hodges, director of communications and talent at the EDPA, at the 2025 Inc. 5000 Conference & Gala in Phoenix.

This sounds simple. It is, in fact, quite rare. The Kauffman Foundation has long documented the friction that kills promising startups—not market failure, but navigational failure: the inability to find the right mentor, the right loan program, the right workforce development partner at the critical moment. Alabama has engineered its ecosystem explicitly to eliminate that friction.

The result is a startup environment that punches well above its weight class. Birmingham’s Innovation Depot, the Southeast’s largest tech incubator, provides the physical and institutional scaffolding. Auburn University’s New Venture Accelerator has launched more than 50 businesses that have attracted over $47 million in venture investment and created more than 370 jobs. The University of Alabama’s EDGE incubator anchors Tuscaloosa. And HudsonAlpha Institute for Biotechnology in Huntsville is spinning out life-science ventures at a pace that would surprise most biotech observers outside the Southeast.

Together, these nodes form what urban economists call a “distributed innovation geography”—a web of hubs rather than a single megalopolis. It is, not coincidentally, exactly the structure that the Brookings Institution has advocated as the most resilient model for regional innovation growth.

2. How Alabama Is Closing the Capital Gap—and Making It Stick

Identifying the problem is easy. Alabama’s startup funding landscape faced a structural deficit that is common to nearly every non-coastal state: a shallow pool of local venture capital, reluctant institutional investors, and the persistent gravitational pull of San Francisco and New York on promising founders and their companies.

The solution Alabama chose is, I would argue, one of the most architecturally sophisticated public-private capital strategies in the United States today.

At its core sits Innovate Alabama—the state’s first public-private partnership expressly focused on growing the innovation economy. Funded through a U.S. Department of the Treasury award of up to $98 million via the State Small Business Credit Initiative (SSBCI), Innovate Alabama has constructed a multi-layered capital stack: the LendAL program extends credit to small businesses through private-lending partnerships; InvestAL provides high-match equity investments both directly into startups and through trusted local venture funds; and a network of supplemental grants, tax incentives, and accelerator partnerships rounds out the toolkit.

What makes this architecture genuinely distinctive is not the instruments themselves—development finance has existed for decades—but the conditions attached to the capital. Charlie Pond, executive director of Alabama SSBCI at Innovate Alabama, is explicit: “We built that into our agreement with Halogen Ventures and other funds—that the money has to go to Alabama companies.” The vision, he adds, is generational: “This isn’t a one-time $98 million into the ecosystem and then we’re done. We want this to be around for a long time.”

This structural insistence that returns stay in Alabama—recycling capital back into the ecosystem rather than flowing to coastal LPs—is precisely the mechanism that differentiates Alabama’s model from the well-intentioned but often extractive pattern of outside capital flowing briefly through secondary markets before departing.

Innovate Alabama has already made 17 direct investments under the InvestAL program, with companies ranging from biotech and life sciences to AgTech and professional services. Through partnerships with gener8tor Alabama and Measured Capital—two VC firms with deep local roots and a mandate to reinvest in-state—the program is deploying a fund-of-funds strategy designed to build durable capital density. To date, 179 Alabama startups have graduated from gener8tor programs, securing nearly $80 million in follow-on funding.

In June 2025, Innovate Alabama went further still: it launched the Venture Studio and Fund in partnership with Harmony Venture Labs, a Birmingham-based company that supports new enterprises. The studio begins not with capital but with problems—industry challenges identified through deep fieldwork, then matched with founders and early investment. The Innovate Alabama Venture Studio and Fund aims to launch 10 new companies and attract $10 million in venture capital by 2028 and hopes to generate millions in economic impact across the state.

Compare this to what the NVCA’s 2025 Yearbook documents at the national level: median fund size outside California, New York, and Massachusetts was just $10 million—less than half the overall U.S. median of $21.3 million. Despite the substantial dry powder available, with $307.8 billion in capital ready to be deployed, investors have been holding off due to market uncertainty. Alabama is not waiting for that capital to find its way south on its own. It is building the infrastructure to attract, generate, and retain it locally.

3. The SmartWiz Test: Why Alabama Founders Are Choosing to Stay

No story captures the Alabama startup model more vividly—or more movingly—than SmartWiz.

Five Auburn University students, bonded through fraternity life and a shared frustration with the misery of tax preparation, spent years building a platform that compresses a four-hour tax return process into roughly 20 minutes. They are Tevin Harrell, Olumuyiwa Aladebumoye, Jordan Ward, Justin Robinson, and Bria Johnson—a team of tech entrepreneurs and tax professionals who founded SmartWiz in 2021 in Birmingham and have quickly emerged as one of only 16 IRS-approved tax software providers worldwide.

Their journey through Alabama’s ecosystem reads like a case study in coordinated public-private support: $50,000 in early seed funding through the Alabama Launchpad program; $500,000 from Innovate Alabama’s SSBCI; and additional investments from Techstars Los Angeles, Google, and entertainer Pharrell Williams.

Then came the test. SmartWiz was offered the opportunity to relocate to Los Angeles with $3 million in funding for its latest investment round. It chose to remain and expand in Alabama.

“We respectfully turned down that $3 million and came back to Alabama,” COO Aladebumoye said at the Inc. 5000 panel. “That’s where we ran into the SSBCI grant.” The grant helped close the seed round on Alabama’s terms.

The decision was not sentimental. It was strategic. Alabama’s workforce development agency AIDT is providing services valued at $780,000 to support SmartWiz’s expansion, and the City of Birmingham and Jefferson County are providing local job-creation incentives totaling a combined $231,000. SmartWiz plans to create 66 new jobs over the next five years, with an average annual salary of $81,136, and the growth project is projected to have an economic impact of $9.6 million over the next 20 years.
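The incentive package described above can be tallied in a few lines. The Python sketch below uses only the figures quoted in this paragraph; the names are illustrative, and the payroll line assumes all 66 positions are filled at the stated average salary, which the five-year hiring plan would only reach at the end.

```python
AIDT_SERVICES_USD = 780_000      # workforce services valued by AIDT
LOCAL_INCENTIVES_USD = 231_000   # City of Birmingham + Jefferson County combined
PLANNED_JOBS = 66
AVERAGE_SALARY_USD = 81_136


def incentive_summary() -> dict[str, float]:
    """Summarise public support and projected payroll from the quoted figures."""
    total_support = AIDT_SERVICES_USD + LOCAL_INCENTIVES_USD
    return {
        "total_support": total_support,
        "support_per_job": total_support / PLANNED_JOBS,
        "annual_payroll_at_full_hiring": PLANNED_JOBS * AVERAGE_SALARY_USD,
    }


if __name__ == "__main__":
    for label, value in incentive_summary().items():
        print(f"{label}: ${value:,.0f}")
```

On these inputs, public support comes to $1,011,000, or roughly $15,300 per planned job.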

Harrell’s framing of this choice cuts to the heart of Alabama’s competitive proposition: “As a business owner, people are your biggest investment.” What Alabama offers, in his telling, is not just cheaper real estate or lower burn rates—though both matter—but a community of support that a relocated startup in Los Angeles could not replicate at any price.

This is what I would call the SmartWiz Test: when a founder turns down three times their current raise to stay in your ecosystem, you have built something real.

4. Talent, Training, and the Infrastructure of Retention

Founder retention is the Achilles heel of every emerging startup ecosystem. Build a great company in Memphis or Montgomery and the conventional wisdom says that as soon as you raise a serious round, you will relocate to be near your investors, your acqui-hire targets, and your talent pool. Alabama is systematically dismantling that logic.

The Alabama Industrial Development Training (AIDT) program—operating through the Department of Commerce—offers startup founders customized recruitment and training support tied directly to job-creation milestones. Unlike generic workforce programs, AIDT works with each company to identify the specific skill sets its workforce will need as it scales. It is, in effect, a bespoke talent pipeline that adjusts to the startup’s roadmap rather than forcing the startup to adjust to the market.

Innovate Alabama’s Talent Pilot Program extends this model by funding bold, scalable solutions to Alabama’s broader workforce challenge—paid internships, STEM acceleration, and work-based learning programs designed to keep the state’s best graduates in-state.

The effects are measurable. Birmingham was designated one of 31 federal Tech Hubs—the only city in the Southeast to receive the distinction—positioning it for substantial federal investment in innovation infrastructure. HudsonAlpha has made Huntsville a nationally recognized node in the biotech talent network. Auburn and the University of Alabama together generate a pipeline of engineering and business graduates increasingly likely, because of programs like Alabama Launchpad, to start companies at home rather than migrate to coastal markets.

The Brookings Institution’s research on growth centers makes this point with precision: talent retention is not primarily a question of amenities or wages. It is a question of opportunity density—the number of high-quality, high-growth companies and institutions concentrated in a geography. Alabama is deliberately thickening that density.

5. A Global Blueprint: What Alabama Can Teach the World

In covering innovation ecosystems across four continents, I keep returning to a structural insight that Alabama is proving with empirical force: the most resilient startup ecosystems are not the largest or the best-capitalized. They are the most coherent—the ones where state policy, private capital, university research, incubation infrastructure, and founder community all pull in the same direction at the same time.

Israel’s famed startup ecosystem—often held up as the gold standard for a small geography punching above its weight—succeeded not because Israeli venture capital was particularly sophisticated in the early years, but because of deliberate public-private coordination, military-derived talent pipelines, and a cultural insistence that founders stay and build at home. The Yozma program, launched in 1993, used a government fund-of-funds to catalyze private VC—exactly the structural logic behind Alabama’s InvestAL. Alabama is, in important respects, attempting something analogous: using public capital not to replace private investment but to de-risk and attract it.

Estonia’s digital transformation—a country of 1.3 million people that became a global model for e-governance and startup density—succeeded through the same coordinated coherence, not through the sheer volume of capital. Rwanda’s innovation push in Kigali, East Africa’s most deliberate attempt to build a technology economy from the top down, draws the same lesson: intentionality and ecosystem design matter more than proximity to existing capital pools.

What Alabama has that many of these comparators lacked in their early stages is something harder to engineer: community. The panel at the Inc. 5000 conference kept returning to this word, and it deserves examination. Community, in the Alabama startup context, means something specific: a network of founders, investors, educators, and state officials who know each other, refer to each other, and take responsibility for each other’s success. It is the opposite of the anonymous, transaction-driven culture of Silicon Valley at scale.

“The barrier to entry to succeed in Alabama,” as one panelist put it at the Inc. 5000 conference, “is just your willingness to hustle.” That framing deserves to be taken seriously. In San Francisco, the barrier to entry is, increasingly, a warm introduction to a partner at a top-decile firm, a Stanford pedigree, and the financial runway to survive eighteen months without a paycheck. Alabama’s model—meritocratic, community-anchored, and deliberately inclusive—is not only more equitable. It may, over time, prove more durable.

SmartWiz was founded by five Black entrepreneurs from Auburn. They were backed by Pharrell Williams’ Black Ambition Prize, the Google for Startups Black Founders Fund, and a state ecosystem that met them where they were rather than requiring them to relocate to access capital. That is not incidental to Alabama’s model. It is central to it.

6. The 2026 Moment: Why Now Matters

U.S. venture capital is at a genuine inflection point. As 2026 begins, optimism is cautiously returning—the IPO window has begun to open, secondaries have gained acceptance as a critical liquidity outlet, and early-stage investing is regaining strength. The concentration problem that has plagued the market—half of all venture dollars went into just 0.05% of deals in 2025—creates a structural opening for ecosystems that have been building patiently, without depending on the mega-rounds that define and distort coastal markets.

Alabama has been building exactly that. Its $98 million SSBCI deployment is not finished. Its Venture Studio has barely begun. Its pipeline of university-trained founders is expanding. And critically, its brand as a founder-friendly ecosystem is gaining the kind of national visibility—through the Inc. 5000 stage, through SmartWiz’s headline-making story, through Innovate Alabama’s increasingly sophisticated capital architecture—that attracts the next wave of entrepreneurs and investors.

The Innovate Alabama Venture Studio’s goal of launching 10 new companies and attracting $10 million in venture capital by 2028 is modest by coastal standards. It is transformative by the standards of what secondary markets have historically been able to achieve. And if the ecosystem’s track record holds, with the portfolio seeded by the $6 million invested through Alabama Launchpad continuing to grow beyond its current $1 billion valuation, the returns will be impossible to ignore.

There is a moment in the development of every successful regional ecosystem when it tips from “interesting experiment” to “self-reinforcing flywheel.” The exits create the angels. The angels fund the next cohort. The wins attract talent. The talent attracts the next round of capital. Observers who watched Austin in 2010 or Miami in 2019 know this pattern well. Alabama, in 2026, looks poised for exactly that transition.

Opinion: Alabama Is Writing the Next Chapter of American Innovation

The coastal consensus in American venture capital holds, implicitly if not always explicitly, that innovation is a product of density—of the accidental collisions that happen when enough smart, ambitious people are crammed into San Francisco or Manhattan. There is truth in this. There is also, increasingly, evidence that it is incomplete.

Density without coherence produces exclusion. It produces the housing crisis that is bleeding talent out of San Francisco. It produces the founder burnout that has come to define the “move fast and break things” generation. It produces ecosystems that are brilliant at the top and fragile everywhere else.

Alabama is demonstrating an alternative. Not a rejection of density, but a designed coherence—a deliberate alignment of capital, community, training, policy, and founder support that creates the conditions for high-growth companies to start, scale, and stay. The fact that Alabama can offer this while also offering a cost structure that extends a startup’s runway by twelve to eighteen months compared to the Bay Area is not a side benefit. It is a competitive advantage of the first order.

For policymakers in secondary markets from the American Midwest to Southeast Asia, Alabama’s model contains a clear set of replicable principles: anchor public capital to local returns; build incubation infrastructure before trying to attract outside investors; treat founders as the customer of the ecosystem rather than as the raw material; invest relentlessly in talent retention; and understand that community is not a soft amenity—it is the operating system on which everything else runs.

The future of American innovation does not belong exclusively to Silicon Valley. It belongs to the places that figure out, as Alabama is figuring out, that the best investment a region can make is not in a single unicorn but in the conditions that make unicorns possible—and that make founders choose to stay and build them at home.

The magic of Alabama, ultimately, is not in the dollar amounts or the portfolio valuations, impressive as they are. It is in a group of five Auburn graduates turning down a $3 million check to fly back home to Birmingham, walk into Innovation Depot, and build something the world has not seen before.

That is what a real startup ecosystem looks like. And the rest of the country—and the world—should be paying attention.
